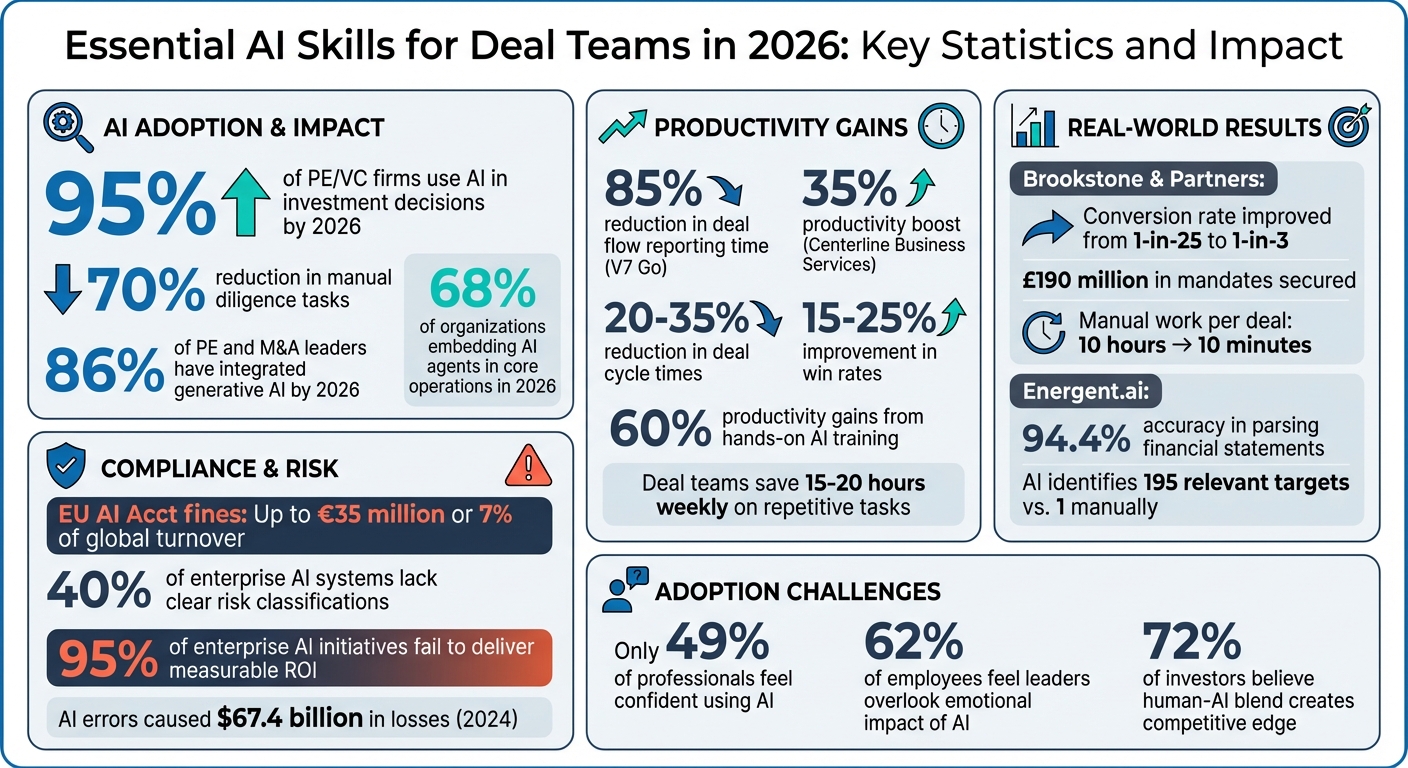

AI is now central to private market deal-making. By 2026, 95% of private equity and venture capital firms use AI in investment decisions, with tools reducing manual diligence tasks by up to 70%. However, success depends on teams mastering AI-driven workflows, validating outputs, and navigating compliance risks. Key skills include:

- Writing precise AI prompts: Use frameworks like "Context + Task + Output" for actionable insights.

- Interpreting AI results: Cross-check findings with traceable sources to ensure accuracy.

- Validating AI outputs: Combine AI with human review for critical decision-making.

- Workflow automation: Leverage no-code tools like V7 Go or Midaxo AI to save time.

- Mitigating AI bias: Detect and address biases to avoid flawed assessments.

- Compliance: Align with GDPR and the EU AI Act to avoid fines up to €35 million.

AI isn't replacing dealmakers but is reshaping how they work. Teams that integrate AI effectively gain a competitive edge, cutting diligence times and improving decision quality. The focus remains on human judgement, but AI enhances speed and precision, allowing professionals to concentrate on high-value activities.

Essential AI Skills for Deal Teams in 2026: Key Statistics and Impact

Core AI Skills for Effective Due Diligence

In 2025, 45% of M&A executives reported regular use of AI tools, more than doubling the previous year’s figure. Furthermore, one-third of the 100 largest transactions that year credited AI-driven due diligence as a key factor in decision-making 6. However, the effectiveness of AI in these processes hinges on how well deal teams can guide, interpret, and validate AI systems across legal, financial, commercial, and sustainability areas.

Writing Effective AI Prompts

Crafting precise prompts is crucial for transforming basic summaries into actionable insights. A helpful framework to follow is "Context + Task + Output." This involves explaining the relevance of the analysis (e.g., aligning with the deal thesis or investment goals), defining the specific task, and outlining the desired format for the output 9. For example, instead of a vague request like "Summarise the contracts", a more targeted prompt could be: "Act as a Senior Private Equity Associate. We are analysing a SaaS business with 15% annual churn assumptions. Extract all customer contract terms related to auto-renewal clauses and organise them in a table listing contract name, renewal terms, and notice periods" 1011.

Breaking down complex tasks into smaller steps - such as Extract → Prioritise → Structure → Draft - can also improve accuracy, particularly when dealing with financial data. Adding instructions like "Think step-by-step" can further improve the precision of outputs 810. Many deal teams now maintain prompt libraries for recurring tasks, which helps ensure consistency and saves time 6.
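
As a rough illustration of the "Context + Task + Output" framework and a shared prompt library, here is a minimal Python sketch. The class name, field names, and library key are hypothetical choices made for this example, not part of any specific tool; the prompt text reuses the auto-renewal example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiligencePrompt:
    """One prompt in the 'Context + Task + Output' shape (names are illustrative)."""
    context: str        # why the analysis matters (deal thesis, assumptions)
    task: str           # the specific extraction or analysis to perform
    output_format: str  # the structure the answer should take

    def render(self) -> str:
        # Keep the three parts visibly separate so a reviewer can audit each one.
        return (
            f"Context: {self.context}\n"
            f"Task: {self.task}\n"
            f"Output: {self.output_format}"
        )


auto_renewal_prompt = DiligencePrompt(
    context=(
        "Act as a Senior Private Equity Associate. We are analysing a SaaS "
        "business with 15% annual churn assumptions."
    ),
    task="Extract all customer contract terms related to auto-renewal clauses.",
    output_format="A table listing contract name, renewal terms, and notice periods.",
)

# A shared prompt library can start as a plain dict keyed by task name.
PROMPT_LIBRARY = {"auto_renewal_terms": auto_renewal_prompt}

print(PROMPT_LIBRARY["auto_renewal_terms"].render())
```

Storing the three parts separately, rather than as one free-text string, makes it easier to reuse the same context across several tasks on one deal.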

Understanding AI-Generated Insights

AI-generated insights, especially risk assessments, should be cross-verified with traceable sources like VDR files or SEC filings 137. This traceability allows deal teams to independently confirm findings, which is critical during negotiations or when presenting to boards.

Modern AI tools often use specialised frameworks to identify risks and opportunities aligned with private market investment criteria. For instance, in November 2024, Centerline Business Services adopted the V7 Go platform for automated analysis of financial statements and contracts. This move led to a 35% productivity boost within a month by eliminating repetitive data entry tasks 12.

"The key differentiator with V7 is its ability to understand complex documents with detailed charts and tables. We have seen nothing that compares to the accuracy we get with using V7" – Trey Heath, CEO, Centerline Business Services 12

Despite these advancements, it’s essential to critically evaluate AI-driven insights, a process explored in more depth in the next section on human validation.

Validating AI Outputs with Human Review

AI tools are excellent for screening and prioritising, but final decisions require human expertise 1416. The "CoPilot, not AutoPilot" approach highlights the importance of human oversight to validate strategic context and make informed decisions 1416. The screening gains can be substantial: in November 2025, a mid-sized private equity firm used an AI tool to review 10,000 pages of legal documentation in under 72 hours. The tool flagged intellectual property transfer issues and vendor indemnities that human reviewers had missed, preventing a £15 million liability 15. Deciding how to act on such flags still rests with the deal team.

Critical findings - such as accounting adjustments or major integration risks - should undergo independent verification and formal sign-off 617.

"The skill of the expert is as important as ever. I've been impressed that, as a toolset, the latest AI iterations were able to check data for adjustment compliance and even assess counterarguments that could be made" – Robert Tailby, Corporate Disposals Adviser 18

AI Integration and Workflow Optimisation

Getting a handle on AI integration and workflow optimisation is becoming a must for deal teams navigating today’s fast-paced due diligence environment. Think about this: deal teams spend an exhausting 15–20 hours each week on repetitive tasks like data entry and status updates 26. That’s a lot of valuable time lost. But now, no-code AI platforms are stepping in, automating these tedious processes and freeing up analysts to focus on strategic, high-value activities. The transition from reactive tools to proactive, autonomous systems is reshaping how private market firms operate in 2026 24.

No-Code Tools for Workflow Automation

AI-powered tools are transforming how core workflows are managed. Take V7 Go, for example - it slashes deal flow reporting time by a staggering 85%, pulling together data from multiple sources into investor-ready summaries 19. Then there’s Midaxo AI, which comes with "Madi", an AI assistant that handles project updates, target enrichment, and document analysis. And the best part? No training needed 20.

"Madi feels like having an extra team member who never sleeps. We accelerated our deal review instantly." – VP Corporate Development, Global Software Firm 20

Here’s a real-world success story: In 2025–2026, Brookstone & Partners, a capital-raising advisory firm led by Kyle Edwards-Brooks, implemented the Atlas AI Deal Capture System. This system automated tasks like lead qualification, pre-call research, and content visibility, boosting their conversion rate from 1-in-25 to 1-in-3. They secured over £190 million in mandates without adding extra staff. Manual work per deal dropped from 10 hours to just 10 minutes 21.

Another standout platform is Energent.ai, which boasts a 94.4% accuracy rate in parsing financial statements and extracting data. It turns unstructured files like PDFs and spreadsheets into polished deliverables such as slide decks 23. Firms using AI for deal flow management typically report a 20–35% reduction in deal cycle times and a 15–25% improvement in win rates 22.

But here’s the thing: before diving into these tools, you need to prepare. Start by standardising CRM fields and filling in any missing data - AI is only as good as the information you feed it 22. Also, map out your pipeline stages to identify where AI can make the biggest impact, whether it’s in data capture or lead scoring 22.
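
A quick way to gauge CRM readiness is to normalise field names and measure how often each required field is actually filled. The sketch below assumes records arrive as plain dictionaries; the required field list is a hypothetical example and should be replaced with your own pipeline schema.

```python
REQUIRED_FIELDS = ("company", "sector", "stage", "owner")  # hypothetical schema


def normalise_record(record: dict) -> dict:
    """Standardise keys and treat blank strings as missing values."""
    cleaned = {}
    for key, value in record.items():
        key = key.strip().lower().replace(" ", "_")
        if isinstance(value, str):
            value = value.strip() or None  # "" and "   " become None
        cleaned[key] = value
    return cleaned


def fill_rates(records: list, required=REQUIRED_FIELDS) -> dict:
    """Return the share of records with a non-missing value for each required field."""
    normalised = [normalise_record(r) for r in records]
    return {
        field: sum(r.get(field) is not None for r in normalised) / len(normalised)
        for field in required
    }


crm_export = [
    {"Company": "Acme Ltd", "Sector": "SaaS", "Stage": " ", "Owner": "JS"},
    {"company": "Beta GmbH", "sector": "", "stage": "Diligence"},
]
print(fill_rates(crm_export))
```

Fields with low fill rates are the ones to fix before pointing a lead-scoring or data-capture tool at the pipeline.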

AI-Driven Collaboration in Multi-Agent Scenarios

AI doesn’t stop at automation; it’s also reshaping collaboration through multi-agent strategies. While no-code tools handle routine tasks, specialised AI agents take on more complex, interconnected duties, making due diligence faster and more efficient. For instance, deal teams now use coordinated agent stacks to tackle different tasks simultaneously. Picture this: a "Day 1" agent indexes a virtual data room (VDR) and prioritises the top 20 documents for review. At the same time, a "Contract Agent" flags potential legal issues, and a "Financial Agent" extracts and normalises KPIs. All this feeds into a comprehensive diligence pack within just 48 hours 1.

This "triangulation strategy" involves deploying multiple agents for specific tasks. One might focus on cleaning up data (like Energent.ai), another on legal or ESG auditing, and a third on running market simulations 23. Human oversight is still crucial, with checkpoints at key stages to ensure quality 1.

AI agents can also act as workstream coordinators, managing due diligence checklists, assigning tasks, tracking progress, and updating risk registers in real time as new documents are added 1. Some firms have even adopted "living memos", where agents automatically update sections of investment committee (IC) memos and flag changes whenever new data enters the VDR 1.

| AI Agent Type | Core Function | Key Output |
|---|---|---|
| Deal Screener | Ingest teasers/CIMs | Go/no-go summary with rationale 27 |
| VDR Indexer | Classify thousands of docs | Priority reading list for analysts 1 |
| Contract Agent | Scan legal documents | Red-flag report on change-of-control 1 |
| IC Prep Agent | Synthesise diligence data | First draft of Investment Committee memo 27 |
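
To make the idea of a coordinated agent stack with human checkpoints concrete, here is a simplified Python sketch. The "agents" are stand-in functions with toy rules (document length, keyword match), not real model calls, and every name is hypothetical. The point is the control flow: each stage's output must pass a human approval callback before it enters the diligence pack. Only two of the agents from the table are shown, for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class DiligencePack:
    """Accumulates approved agent outputs (field names are illustrative)."""
    priority_docs: list[str] = field(default_factory=list)
    red_flags: list[str] = field(default_factory=list)
    approved_stages: list[str] = field(default_factory=list)


def vdr_indexer(docs: dict[str, str], top_n: int = 20) -> list[str]:
    # Stand-in ranking: longest documents first. A real indexer would classify content.
    return sorted(docs, key=lambda name: len(docs[name]), reverse=True)[:top_n]


def contract_agent(docs: dict[str, str]) -> list[str]:
    # Stand-in rule: flag any document that mentions a change-of-control clause.
    return [name for name, text in docs.items() if "change of control" in text.lower()]


def run_pipeline(
    docs: dict[str, str],
    approve: Callable[[str, list[str]], bool],
) -> DiligencePack:
    pack = DiligencePack()
    stages = [
        ("index", lambda: vdr_indexer(docs), "priority_docs"),
        ("contracts", lambda: contract_agent(docs), "red_flags"),
    ]
    for stage, agent, attr in stages:
        result = agent()
        if not approve(stage, result):  # human checkpoint: stop if a reviewer rejects
            break
        setattr(pack, attr, result)
        pack.approved_stages.append(stage)
    return pack


vdr = {
    "nda.pdf": "short",
    "msa.pdf": "Termination on Change of Control of the supplier",
    "spa.pdf": "a much longer share purchase agreement text than the others, with warranties",
}
pack = run_pipeline(vdr, approve=lambda stage, result: True)
print(pack.priority_docs, pack.red_flags)
```

In practice the `approve` callback would route the stage output to a named reviewer rather than return `True` automatically.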

It’s worth noting that 72% of investors believe companies that effectively blend human expertise with AI will gain a competitive edge 25. However, 62% of employees feel that leaders often overlook the emotional impact of introducing AI 25. To bridge this gap, assign business owners from the deal team - not just IT leads - to oversee AI tools. This ensures the technology aligns with your investment strategy and team goals 1.

These advancements, like Axion Lab’s AI-powered due diligence solutions, highlight the potential for seamless AI integration to help deal teams focus on what truly matters: making strategic decisions and driving results.

Ethical and Compliant Use of AI in Private Markets

For deal teams, using AI responsibly is just as important as mastering data automation or crafting precise prompts. In the high-pressure world of deal-making, AI must deliver not only efficiency but also accountability. By 2026, the regulatory landscape will demand rigorous adherence to rules, with potential fines reaching up to €35 million or 7% of global turnover for non-compliance 28. Beyond avoiding penalties, it's crucial to ensure AI systems don’t perpetuate biases or obscure critical decision-making factors. Ethical AI use isn't just a regulatory checkbox - it’s a safeguard for fairness and accuracy.

The challenge is steep. A study by appliedAI revealed that 40% of enterprise AI systems lack clear risk classifications 28, while 95% of enterprise AI initiatives fail to deliver measurable ROI, often due to poor governance and readiness 31. For deal teams, this means developing skills to navigate regulations and detect bias - skills that serve both as protection and a competitive edge.

Following GDPR and EU AI Act Requirements

The GDPR and the EU AI Act work hand-in-hand 3233. Under the AI Act, systems are categorised into four risk levels: Prohibited, High-Risk, Limited Risk, and Minimal Risk. High-risk systems - like those used for credit scoring or insurance assessments - must comply with stringent requirements outlined in Articles 9–49. These include risk management, data governance, technical documentation, and human oversight 28. Breaching these rules could result in fines of up to €15 million or 3% of global annual turnover 28. The higher ceiling of €35 million or 7% applies to prohibited AI practices.

The clock is ticking, with 2nd August 2026 set as the deadline for full compliance with high-risk AI system requirements 28. Deal teams must act now to unify Data Protection Impact Assessments (DPIA) with Fundamental Rights Impact Assessments (FRIA) for any AI handling personal data 28. A comprehensive risk register documenting all AI tools, including third-party APIs, is essential. This register should classify tools according to the AI Act's risk tiers and include technical logs to support monitoring and traceability 28.
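
A risk register of this kind can start as a small structured record per tool. The Python sketch below is one possible shape, using the four risk tiers named above. The field names and the follow-up rules in `open_actions` are simplifying assumptions drawn from this section, not a reading of the regulation itself.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal risk"


@dataclass
class AIToolEntry:
    """One row in the register (all field names are hypothetical)."""
    name: str
    vendor: str
    tier: RiskTier
    handles_personal_data: bool
    third_party_api: bool = False
    logging_enabled: bool = False


def open_actions(entry: AIToolEntry) -> list[str]:
    """List follow-ups implied by the entry, under this sketch's simplified rules."""
    actions = []
    if entry.tier is RiskTier.PROHIBITED:
        actions.append("withdraw tool")
    if entry.handles_personal_data:
        actions.append("complete combined DPIA/FRIA")
    if entry.tier is RiskTier.HIGH and not entry.logging_enabled:
        actions.append("enable technical logging")
    return actions


register = [
    AIToolEntry("contract-screener", "ExampleVendor", RiskTier.HIGH,
                handles_personal_data=True, third_party_api=True),
    AIToolEntry("memo-summariser", "ExampleVendor", RiskTier.MINIMAL,
                handles_personal_data=False),
]
for entry in register:
    print(entry.name, entry.tier.value, open_actions(entry))
```

Recording third-party APIs as entries in their own right keeps tools that were adopted informally from escaping classification.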

AI should never replace human judgement in critical areas like final decisions, sensitive communications, or conflict resolution 30. Licensing terms must also be scrutinised to ensure firms retain rights to AI outputs and prevent proprietary deal documents from being used to train public models 29. Remember, the EU AI Act has extraterritorial reach - it applies to any organisation whose AI systems impact EU residents, regardless of where the company is based 28.

Detecting and Reducing AI Bias

Regulatory compliance sets the foundation, but fairness demands active bias detection and mitigation. Unreliable AI output isn’t hypothetical - it’s a tangible risk. AI errors, or "hallucinations", caused losses estimated at $67.4 billion in 2024 36, and SafeRent faced a $2.2 million settlement due to algorithmic bias 36. For deal teams, unchecked bias could distort valuations, misidentify risks, or lead to flawed assessments of target companies.

Bias can creep in at any stage of the AI lifecycle 34. Common sources include imbalanced datasets (representation bias), flawed feature selection (measurement bias), and systemic inequalities (historical bias) 34. To counteract this, a human-in-the-loop (HITL) approach is essential for validating critical outcomes, such as legal interpretations or valuation adjustments 637.

"AI models may learn from biased data, which often causes them to amplify existing mistakes, inaccurate data sets, and prejudices." – C. Craig Lilly, Partner, Reed Smith 35

Bias mitigation strategies can be grouped into three main areas:

- Pre-processing: Adjusting training data through augmentation or reweighting to ensure balance.

- In-processing: Applying fairness constraints during model training.

- Post-processing: Modifying decision thresholds after training to equalise outcomes 3436.
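
To show what pre-processing by reweighting can look like, here is a small Python sketch of one common scheme, usually called reweighing: each sample gets the weight P(group) × P(label) / P(group, label), so that group and label look statistically independent in the weighted data. The group and label values below are made-up illustrations (for example, a target's region and whether the deal was pursued), and this is only one of several possible pre-processing methods.

```python
from collections import Counter


def reweighing_weights(groups: list, labels: list) -> list:
    """Per-sample weights w = P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group) -> float:
    """Weighted share of positive labels within one group."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total


groups = ["A", "A", "A", "B", "B", "B"]  # hypothetical attribute, e.g. region
labels = [1, 1, 0, 1, 0, 0]              # 1 = pursued, 0 = passed (illustrative)
weights = reweighing_weights(groups, labels)

print(weights)
print(weighted_positive_rate(groups, labels, weights, "A"))
print(weighted_positive_rate(groups, labels, weights, "B"))
```

Before weighting, group A has a positive rate of two in three and group B one in three; after weighting, both groups have the same rate, which is the balance the pre-processing step aims for.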

Deal teams should demand model cards and datasheets from AI vendors. These documents provide transparency about model architecture, training data, and limitations 36. Alarmingly, over 40% of AI vendors cannot explain their models’ decision-making processes for high-stakes scenarios 36, making transparency non-negotiable.

Engaging domain experts and affected groups through participatory design can help uncover hidden biases and challenge assumptions 34. Additionally, adversarial testing or "red teaming" can expose vulnerabilities like prompt injection or data poisoning, which could compromise fairness and integrity 3536. As the Information Commissioner's Office (ICO) explains, "Fairness questions are highly contextual. They often require human deliberation and cannot always be addressed by automated means" 34. This reality is why 57% of mature AI adopters now use a "hub-and-spoke" model for centralising AI governance, privacy, and explainability 31.

Platforms like Axion Lab, which align with GDPR and the EU AI Act, show how responsible AI can integrate seamlessly into due diligence workflows without sacrificing speed or insight. By prioritising these ethical and compliance measures, deal teams can not only avoid regulatory pitfalls but also improve the fairness and reliability of their investment decisions.

Continuous AI Skill Development for Deal Teams

The world of AI is advancing at a speed that many deal teams find hard to match. By 2026, 86% of private equity and corporate M&A leaders are expected to have integrated generative AI into their workflows 38. Even more immediate, 68% of organisations anticipate embedding AI agents into their core operations this year 38. For deal teams, this means more than just adopting AI - it requires a commitment to continually adapt as these tools evolve. Static, one-off training simply won’t cut it. Instead, structured, ongoing strategies are essential to keep up with new tools while maintaining the precision and discipline that private markets demand.

Interestingly, it's not just the firms with the largest budgets that are leading the way. Success often comes from building cross-functional AI adoption teams to ensure seamless integration across various workstreams 37. Some firms are running 30–60 day pilot programmes to test specific applications like CIM summarisation or VDR indexing 1. Others are creating shared prompt libraries to maintain consistency across analysts 6. What sets these firms apart is their commitment to making these practices part of their routine, rather than treating them as one-off initiatives. This constant evolution calls for a proactive and disciplined approach to AI training and adoption.

Customising AI Agents for Private Market Tasks

Tailoring AI agents to meet the specific needs of private markets is a vital part of staying ahead. The good news? Deal teams no longer need coding skills to create highly capable AI agents. Tools like Claude and ChatGPT now allow users to define scoring categories, evaluation rubrics, and weight allocations using plain language 39. The secret to success lies in calibration against historical data: testing these models against 20 to 30 past deals - both pursued and passed - ensures that the AI aligns with your firm’s unique investment approach 39.
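
The calibration step can be sketched in a few lines of Python. Here a rubric is a set of category weights, a deal's score is the weighted sum of its category scores, and calibration measures how often "score at or above threshold" agrees with what the firm actually did on past deals. The categories, weights, threshold, and deal data are all hypothetical placeholders.

```python
RUBRIC_WEIGHTS = {"market": 0.4, "financials": 0.4, "team": 0.2}  # hypothetical


def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted sum of category scores; weights are expected to sum to 1."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[category] * scores[category] for category in weights)


def calibration_agreement(past_deals: list, weights: dict, threshold: float) -> float:
    """Share of past deals where 'score >= threshold' matches the real decision."""
    hits = sum(
        (weighted_score(scores, weights) >= threshold) == pursued
        for scores, pursued in past_deals
    )
    return hits / len(past_deals)


# Each entry: (category scores out of 10, whether the firm pursued the deal).
past_deals = [
    ({"market": 8, "financials": 7, "team": 9}, True),
    ({"market": 4, "financials": 5, "team": 6}, False),
    ({"market": 7, "financials": 3, "team": 8}, True),
]
print(calibration_agreement(past_deals, RUBRIC_WEIGHTS, threshold=6.0))
```

A low agreement rate across the 20 to 30 historical deals suggests the weights or threshold do not yet reflect how the firm actually decides, and the disagreeing deals are a good place to start the review.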

Customisation also extends to agentic workflows, in which AI handles multi-step tasks like extracting metrics from a CIM, populating templates, and drafting initial IC memos. Crucially, this process includes human-in-the-loop (HITL) review gates at key stages such as screening, diligence findings, and investment committee reviews. This ensures the outputs are both accurate and traceable, with every claim linked to specific document pages to prevent errors or hallucinations 1. Many firms are adopting a hybrid build-versus-buy strategy, combining off-the-shelf tools for standard workflows - like board packs (costing £40,000–£240,000 annually) - with custom-built agents for proprietary processes, such as unique diligence playbooks (£160,000–£640,000 to build and maintain) 38.

Adapting to New AI Capabilities

Even with streamlined workflows in place, staying on top of new AI developments is critical for maintaining a competitive edge in due diligence. This requires a disciplined approach. Start with quarterly recalibrations of scoring models to reflect changes in market conditions and investment strategies 39. Implement a model choice policy to determine which specific AI models - such as GPT-4 or Claude - are best suited for tasks like diligence or board pack creation 38. This ensures teams don’t default to outdated tools and instead use the most effective solutions for each task.

The shift from simple chatbots to autonomous agents is happening quickly. These advanced systems can now analyse over 50 customer agreements and assess revenue concentration risks without human input 1. With investment committees now dedicating 30–40% of their time to evaluating a target company’s AI capabilities and data maturity 6, AI readiness has become a critical part of due diligence. To keep up, deal teams should regularly audit citation validity rates - checking how often AI correctly links insights to specific document pages - to ensure model reliability over time 1.
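
One simple way to audit citation validity is to check whether the snippet an AI tool quotes actually appears on the page it cites. The Python sketch below assumes page text has already been extracted into a dictionary keyed by document and page number; the data structures and the substring-match rule are illustrative assumptions, and a production check would likely need fuzzier matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """An AI-generated claim with its cited source (field names are hypothetical)."""
    text: str
    document: str
    page: int
    quoted_snippet: str


def citation_validity_rate(claims: list, pages: dict) -> float:
    """Share of claims whose quoted snippet is found on the cited page.

    `pages` maps (document, page) to the extracted text of that page.
    """
    if not claims:
        return 0.0
    valid = sum(
        claim.quoted_snippet.lower() in pages.get((claim.document, claim.page), "").lower()
        for claim in claims
    )
    return valid / len(claims)


pages = {
    ("msa.pdf", 12): "Either party may terminate on 90 days' notice.",
    ("spa.pdf", 3): "The Seller warrants title to the Shares.",
}
claims = [
    Claim("Notice period is 90 days", "msa.pdf", 12, "90 days' notice"),
    Claim("IP is assigned to the buyer", "spa.pdf", 3, "assigns all intellectual property"),
]
print(citation_validity_rate(claims, pages))
```

Tracking this rate over time, per model and per document type, gives an early signal when a tool upgrade or a new document format degrades traceability.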

Platforms like Axion Lab are leading the charge with AI-powered due diligence tools. These solutions provide traceable, auditable evidence across legal, commercial, financial, and operational areas. They show how AI can integrate smoothly into daily workflows without compromising speed or governance, offering a glimpse of what’s possible when technology and discipline meet.

Conclusion: Building AI-Ready Deal Teams in 2026

This checklist highlights the essential AI skills every deal team member needs. By 2026, AI will be at the heart of deal-making processes. Success won’t necessarily hinge on having the biggest budget - it will come down to equipping teams with the right tools and training. The difference between leading the pack or falling behind lies in whether your team can leverage AI for tasks like VDR indexing or continues to rely on outdated manual processes.

Currently, only 49% of professionals feel confident using AI, even though 86% of companies are expected to have integrated these tools by 2026 40. This gap presents both a challenge and an opportunity. Teams that prioritise continuous, hands-on training - focused on practical applications - are already seeing productivity gains of nearly 60% 2. On the other hand, firms sticking to manual methods are losing ground to AI-driven competitors, who can identify 195 relevant targets in the time it takes a junior analyst to find just one 4. These numbers emphasise the urgency of building solid AI expertise while underscoring the ongoing importance of human judgement 5.

As David Petrie, Head of Corporate Finance at ICAEW, aptly states:

"The negotiation and relationship‑building side of deal‑making remains profoundly human" 5

AI isn’t here to replace human judgement - it’s here to enhance it. The most effective teams use AI to handle data-heavy analysis, which typically consumes 60% to 70% of their time. This allows professionals to focus on what truly matters: building conviction, standing out in the market, and fostering relationships 3. But this shift requires a new mindset - one that sees AI not as a threat, but as a tireless junior team member delivering faster, more thorough insights than manual processes ever could.

Take Axion Lab’s tools as an example. These purpose-built AI solutions speed up due diligence by rapidly indexing documents and identifying red flags. They help deal teams maintain efficiency without sacrificing governance, offering traceable and auditable evidence across legal, commercial, financial, and operational areas 1. By processing thousands of documents in hours and flagging risks aligned with firm-specific legal playbooks, tools like these underscore the potential of AI to transform deal-making 1.

Leading firms are already running 30–60 day pilots, creating shared prompt libraries, and embedding human oversight at critical decision points 16. With one-third of the 100 largest transactions in 2025 citing AI-enabled due diligence as a decisive factor 6, the question isn’t whether your teams should embrace AI - it’s how quickly you can make them ready for the future.