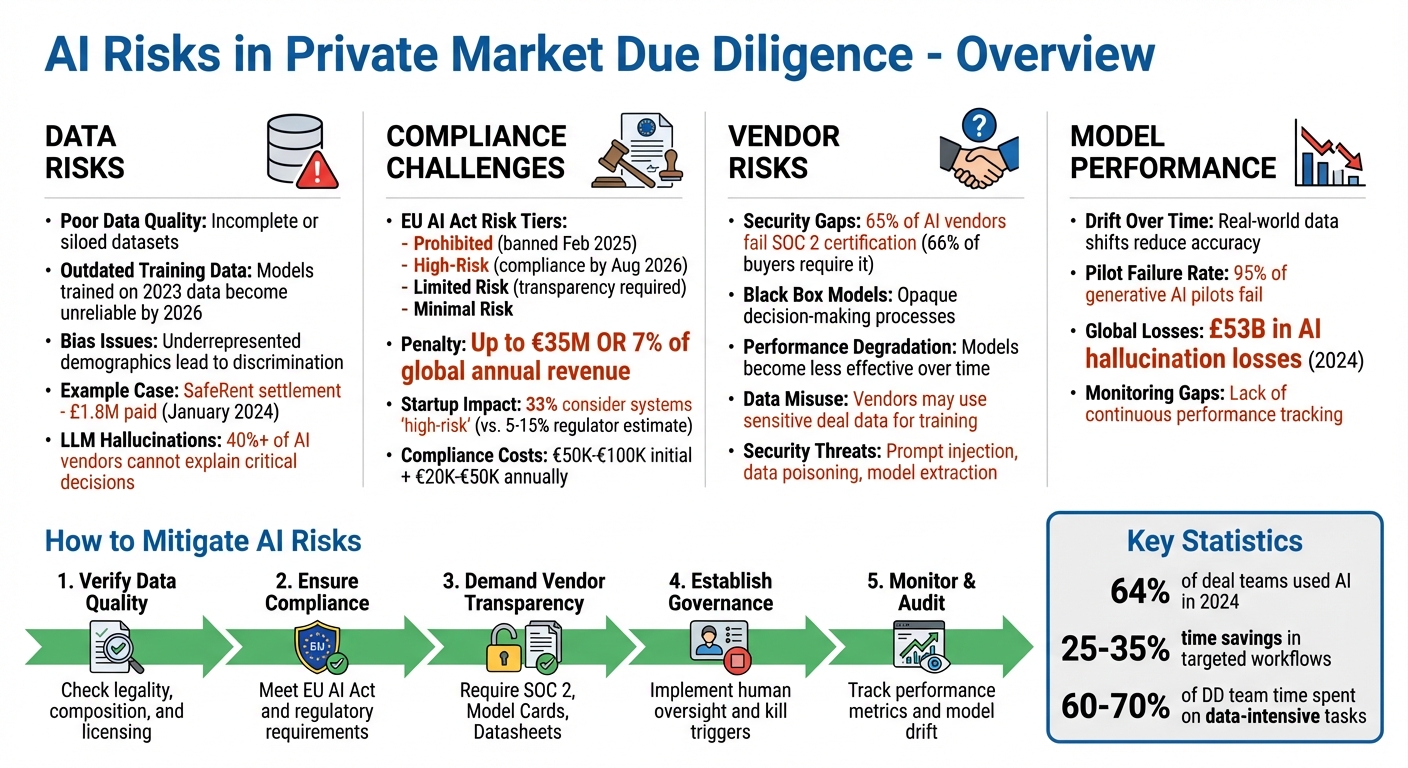

Artificial intelligence (AI) is transforming private market due diligence by speeding up processes like document reviews, financial analysis, and risk assessments. But adopting AI comes with risks that can lead to costly mistakes if not managed properly. These include poor data quality, model bias, regulatory non-compliance, and vendor issues. Here's what you need to know:

- Data Risks: Inaccurate or outdated training data can lead to unreliable outputs. Bias in datasets can also result in discriminatory decisions, exposing firms to legal and reputational risks.

- Compliance Challenges: Regulations like the EU AI Act require transparency, documented audit trails, and strict adherence to data laws. Non-compliance can lead to fines of up to €35 million or 7% of global annual revenue.

- Vendor Risks: Many AI vendors lack transparency, fail to meet security standards, and may use sensitive deal data improperly. Contracts must include clauses to safeguard data and ensure accountability.

- Model Performance: AI models can degrade over time, leading to errors or missed risks if not monitored and updated regularly.

To manage these risks, firms should:

- Verify data quality and legality.

- Ensure AI tools comply with regulations.

- Demand transparency and security from vendors.

- Set up governance frameworks and human oversight.

- Regularly monitor and audit AI performance.

Key AI Risks in Private Market Due Diligence and Mitigation Strategies

Key AI Risks in Private Market Due Diligence

Data Quality and Bias Issues

The effectiveness of AI hinges on the quality of the data it processes. Poor data quality - whether due to incomplete datasets or siloed information - remains a major challenge for firms adopting AI. In fact, many companies find themselves addressing these problems only after a deal is finalised, leading to costly remediation efforts 4. Another concern is the static nature of training data. For example, models trained on 2023 data may become less accurate by 2026 as market conditions evolve, resulting in unreliable outputs 62.

Bias in historical datasets is another minefield. When training data underrepresents certain demographics, it can lead to discriminatory outcomes, exposing firms to legal and reputational risks. A stark example is the January 2024 SafeRent settlement, where the company paid approximately £1.8 million after being accused of algorithmic bias in its tenant screening software 6.

Large language models (LLMs) bring their own set of challenges, particularly hallucinations - instances where models generate convincing but incorrect information. This can undermine the reliability of investment theses. Alarmingly, more than 40% of AI vendors are unable to provide clear explanations for critical decisions, such as identifying fraudulent transactions or rejecting credit applications 6. Without traceable outputs tied to specific sources, firms risk basing decisions on fabricated or unverifiable data.

Data provenance further complicates things. Many AI models are trained on web-scraped or unlicensed third-party data, creating potential liabilities under GDPR and the EU AI Act 62. To mitigate these risks, firms should verify the legality of training data and request detailed documentation, such as "Datasheets for Datasets", which outline data composition, collection methods, and licensing rights 6.

Beyond data concerns, navigating the regulatory landscape adds another layer of complexity to AI due diligence.

Compliance and Regulatory Challenges

AI regulations are becoming increasingly stringent. The EU AI Act, for instance, categorises AI systems into four risk tiers: Prohibited (banned as of February 2025), High-Risk (requiring compliance by August 2026), Limited Risk (subject to transparency obligations), and Minimal Risk 67. Non-compliance can result in penalties of up to €35 million or 7% of global annual revenue 67.
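
To make the penalty ceiling concrete, the sketch below works out the figure for a given revenue. It assumes the higher of the fixed amount and the revenue percentage applies, which the summary above does not spell out. The function name is illustrative, and the result is an upper bound, not a prediction of any actual fine.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling quoted above
REVENUE_SHARE_PCT = 7        # percentage of global annual revenue quoted above


def max_penalty_eur(global_annual_revenue_eur: float) -> float:
    """Upper bound on the fine, ASSUMING the higher of the two caps applies."""
    revenue_based_cap = global_annual_revenue_eur * REVENUE_SHARE_PCT / 100
    return max(FIXED_CAP_EUR, revenue_based_cap)


# Under this assumption the revenue-based cap only exceeds the fixed cap
# once revenue passes EUR 500 million.
for revenue in (200_000_000, 500_000_000, 1_000_000_000):
    print(f"revenue {revenue:>13,} -> ceiling {max_penalty_eur(revenue):,.0f}")
```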

Interestingly, a recent survey found that 33% of AI startups consider their systems "high-risk" under the EU AI Act - far exceeding the initial regulator estimates of 5% to 15% 8. Preparing for compliance is no small task, with high-risk startups budgeting between €50,000 and €100,000 for initial compliance efforts and allocating an additional €20,000 to €50,000 annually for ongoing requirements 8. These costs directly influence how private equity firms approach AI adoption in due diligence processes. For compliance under Article 26, firms must ensure vendors meet transparency requirements, maintain technical documentation, and establish protocols for human oversight 6.

"Organisations deploying high-risk AI without vendor transparency face regulatory penalties up to €35M under the EU AI Act and class‐action exposure."

– Joe Braidwood, CEO, GLACIS 6

Regulatory scrutiny is also intensifying around "AI washing" - where companies exaggerate their AI's capabilities. For instance, in 2024, the Texas Attorney General investigated Pieces Technologies over claims that its AI had an implausibly low "hallucination rate of <0.001%" 6.

While compliance is a major hurdle, the risks associated with third-party vendors add yet another layer of complexity.

Third-Party Vendor Risks

Relying on external AI vendors introduces risks that traditional IT due diligence often overlooks. For instance, over 66% of B2B buyers now require SOC 2 certification before signing contracts with SaaS providers, yet 65% of AI vendors fail to meet this standard 6. Even certified vendors often struggle with transparency, particularly around "black box" models, where the decision-making process is opaque 6.

Another issue is the degradation of AI performance over time. As real-world data shifts, models may become less effective 6. Additionally, vendors might use sensitive deal data to refine their models unless firms negotiate "zero data retention" clauses, potentially breaching confidentiality agreements and NDAs 36.

Security is another concern. AI systems are vulnerable to threats like prompt injection, data poisoning, and model extraction - risks that traditional penetration tests often fail to detect 6. Vendor lock-in further complicates matters, as transferring fine-tuned models and capabilities between platforms is rarely straightforward 6. To address these challenges, firms should insist on AI-specific service-level agreements that cover latency, accuracy thresholds, and advance notice (at least 90 days) before deprecating model versions 6.

"Traditional IT vendor due diligence is insufficient for AI systems. Even certified vendors often lack transparency into model behaviour, training data provenance, or bias mitigation."

– Joe Braidwood, CEO, GLACIS 6

How to Evaluate AI Tools for Risk Management

Checking Security and Compliance Standards

When it comes to evaluating AI tools for due diligence, security certifications are a cornerstone of effective risk management. At a minimum, organisations should look for SOC 2 Type II certification. It's essential to confirm that the report is less than 12 months old and explicitly addresses AI systems, not just general corporate IT infrastructure 6.

In addition to SOC 2, certifications such as ISO 27001 (for information security management) and ISO 42001 (specific to AI management systems) are crucial 6. Standard penetration tests fall short when it comes to AI-specific threats like prompt injection, data poisoning, and model extraction, so these additional certifications help fill the gap 6.

"Traditional IT vendor due diligence is insufficient for AI systems... Due diligence is no longer optional - it's a legal requirement."

– Joe Braidwood, CEO, GLACIS 6

Another critical area is data provenance. Firms must verify the legal basis for the training data used in the AI tool, ensuring that proper licences and consents are in place and that the model does not rely on unlicensed or scraped content. Checking data refresh rates is also vital, so that models remain up to date 211. For example, Axion Lab provides a platform with traceable and auditable insights, enterprise-grade security features like end-to-end encryption, GDPR compliance, and zero data retention. The aim is that every AI-generated claim can stand up to scrutiny in investment committees.

Once security and compliance are confirmed, it's time to focus on the model's transparency and reliability.

Assessing Model Transparency and Reliability

Transparency is just as important as security, especially given ongoing concerns about vendors providing vague or incomplete explanations. To address this, organisations should request Model Cards, which detail the model's architecture and performance, as well as Datasheets that outline data composition and labelling practices 6. This matters because more than 40% of AI vendors are unable to explain how their models make critical decisions 6.

Reliability is another key factor. Ensure models have been rigorously tested using holdout data and evaluated against specific performance metrics - not left as untested prototypes 9. AI tools should also provide evidence-linked outputs, such as citations to specific document sections or excerpts, so that every claim or metric can be traced back to its source 10. These steps directly address concerns about data integrity and vendor transparency.
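
One way to enforce evidence-linked outputs is to reject any finding that arrives without a usable citation. The sketch below is a minimal illustration of that check. The `Citation` and `Finding` structures and their field names are hypothetical, not the schema of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class Citation:
    document: str  # hypothetical: file name in the data room
    section: str   # hypothetical: clause or page reference
    excerpt: str   # quoted text that supports the claim


@dataclass
class Finding:
    claim: str
    citations: list[Citation] = field(default_factory=list)


def untraceable(findings: list[Finding]) -> list[Finding]:
    """Return findings with no citation, or with a citation lacking an excerpt."""
    return [
        f for f in findings
        if not f.citations or any(not c.excerpt.strip() for c in f.citations)
    ]
```

Findings returned by `untraceable` would go back for human review instead of into the investment memo.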

For high-stakes decisions, incorporating human-in-the-loop (HITL) controls is essential. This involves setting up checkpoints where experts review and approve AI-generated findings 410. Additionally, monitor for performance drift by implementing periodic refresh cycles, as AI models can degrade over time due to changes in real-world data 9. It's worth noting that 95% of generative AI pilots fail because theoretical expectations often don’t align with practical results 4. This underscores the importance of thorough evaluation before deploying any AI tool.

Setting Up Governance Frameworks for AI Use

Creating AI Oversight Policies

Bringing together legal, technical, and commercial teams is crucial to managing AI risks effectively. By treating these risks as strategic issues rather than isolated technical challenges, organisations can address them comprehensively at all levels 12. A robust framework should focus on four key areas: recognising known risks, implementing internal safeguards, learning from past mistakes, and maintaining a clear communication plan for high-pressure scenarios 5.

Transparency should be a non-negotiable requirement. Vendors must provide tools like Model Cards and Datasheets, which outline key details such as architecture, performance metrics, limitations, and data origins 6. This is particularly important because more than 40% of AI vendors are unable to explain how their models arrive at critical decisions 6.

For high-risk applications, human oversight is indispensable. Implement human-in-the-loop controls in line with Article 14 of the EU AI Act, which applies to high-risk systems from August 2026. Additionally, define "kill triggers" - specific conditions that require immediate suspension of the system. For example, if the model's accuracy falls below an agreed threshold or bias is detected, the system should be halted and reviewed 65.
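
Kill triggers are easiest to enforce when they are written down as explicit, testable conditions. The following sketch shows one possible shape. The threshold values and names are hypothetical placeholders; the real figures would come from the firm's own oversight policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillTriggers:
    # Hypothetical values; agree the real thresholds in the oversight policy.
    min_accuracy: float = 0.90
    max_bias_gap: float = 0.10


def suspension_reasons(
    accuracy: float,
    bias_gap: float,
    triggers: KillTriggers = KillTriggers(),
) -> list[str]:
    """Return the triggers that fired; an empty list means keep running."""
    reasons = []
    if accuracy < triggers.min_accuracy:
        reasons.append(
            f"accuracy {accuracy:.2f} below {triggers.min_accuracy:.2f}"
        )
    if bias_gap > triggers.max_bias_gap:
        reasons.append(
            f"bias gap {bias_gap:.2f} above {triggers.max_bias_gap:.2f}"
        )
    return reasons
```

Any non-empty result would halt the system and route the case to a human reviewer, so the decision to resume stays with a person.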

"A GP does not lose capital by talking about risk. They win capital by taking ownership of it." – Altss 5

AI tools should also be classified based on their risk levels - high, medium, or low. High-risk systems, such as those impacting legal rights or major investment decisions, require full governance protocols. On the other hand, low-risk automation may only need standard IT controls 6.

Once internal governance is set up, these standards should extend to managing relationships with AI vendors.

Managing AI Vendor Relationships

Internal governance protocols should serve as the foundation for managing vendor relationships. Vendor contracts must incorporate rigorous standards covering areas like security, model transparency, data practices, bias prevention, compliance, business continuity, and governance 6. Interestingly, while over 66% of B2B buyers now require SOC 2 certification, many certified vendors still fail to provide adequate transparency into their model behaviour 6.

Contracts should include specific AI-related terms. For instance, version pinning can prevent untested automatic updates from disrupting operations 6. Other essential clauses include a 90-day notice for changes to APIs or model endpoints, performance guarantees with accuracy benchmarks, strict data retention policies, and liability provisions for gross negligence, data breaches, or regulatory violations 6. The stakes are high, with penalties under the EU AI Act reaching up to €35 million or 7% of global annual revenue 6.
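
Two of these clauses, version pinning and the 90-day notice period, can be checked mechanically. The sketch below assumes the vendor reports a model version string with each response and announces changes with dated notices. Both are assumptions about the vendor's behaviour, and the function names are illustrative.

```python
from datetime import date

MIN_NOTICE_DAYS = 90  # notice period from the contract clause described above


def notice_is_sufficient(
    announced: date, effective: date, min_days: int = MIN_NOTICE_DAYS
) -> bool:
    """True if the gap between announcement and change meets the notice period."""
    return (effective - announced).days >= min_days


def version_matches_pin(pinned_version: str, served_version: str) -> bool:
    """True if the vendor is still serving the contractually pinned version."""
    return pinned_version == served_version
```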

To protect proprietary data, prohibit vendors from using your due diligence information to train or improve their models without explicit consent 6. Demand zero data retention options to ensure sensitive information isn’t reused.

Regular monitoring is vital. Set up quarterly business reviews (QBRs) to evaluate model performance against service-level agreements (SLAs), check bias metrics, and review the vendor’s plans for meeting new regulatory requirements 6. Retain audit rights to verify compliance with security measures, bias testing, and data handling practices. California’s Automated Decision-Making Technology regulations, effective January 2026, already mandate pre-use notices and opt-out mechanisms for AI in employment and credit decisions 6. Keeping vendor relationships aligned with these evolving standards is critical.

"Compliance documentation isn't proof. Evidence is." – Joe Braidwood, CEO, GLACIS 6

Preparing Your Organisation for AI Adoption

Once you've established strong vendor governance, the next step is preparing your organisation for AI adoption. This involves equipping your teams with the right skills and carefully evaluating the return on investment (ROI).

Training Teams and Managing Change

Start by clearly defining roles. AI is well suited to data-heavy tasks like document review, financial analysis, and pattern recognition, which frees human teams to concentrate on strategic planning, ethical oversight, and nuanced decision-making 133. This division not only streamlines workflows but also helps teams view AI as a tool to ease their workload, especially since repetitive tasks can take up 60–70% of their time 3.

Adopt a phased approach to AI integration to avoid overwhelming your teams. Begin with a Discovery Sprint to map existing workflows and pinpoint areas where automation could make the biggest impact. Test AI tools on a small scale - such as two or three completed deals - comparing their outputs to known results to ensure accuracy before moving to live operations 3. During initial live transactions, run AI alongside traditional methods, allowing teams to validate its performance in real time and fine-tune workflows accordingly 3.
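
The back-test on completed deals can be reduced to a simple question: of the issues the team already knows about, how many did the tool also flag? A minimal sketch follows. It assumes issues have been normalised to comparable labels, which is itself non-trivial in practice, and the function name is hypothetical.

```python
def backtest_recall(
    known_issues: dict[str, set[str]],
    ai_flags: dict[str, set[str]],
) -> dict[str, float]:
    """Per-deal share of known issues that the AI tool also flagged."""
    results = {}
    for deal, known in known_issues.items():
        flagged = ai_flags.get(deal, set())
        # A deal with no known issues cannot be missed, so score it 1.0.
        results[deal] = len(known & flagged) / len(known) if known else 1.0
    return results
```

A low score on a completed deal is a signal to investigate before the tool goes anywhere near a live transaction.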

"AI won't replace experienced deal teams, but firms that learn to use AI and integrate AI into each diligence stream will move faster, identify risks earlier, and build more defensible investment theses." – Private Equity List 1

To make this transition smoother, invest in training programmes that teach practical skills like prompt engineering, data interpretation, and critically evaluating AI-generated insights 13. Upgrade your internal data systems to ensure financial and operational data is clean, well-structured, and easily accessible 1. Create formal feedback loops where team members can cross-check AI recommendations with source materials, helping to continuously improve the system's accuracy 13.

Once your teams are ready, the focus shifts to evaluating the benefits of AI and planning for broader implementation.

Measuring ROI and Scaling AI Use

To justify scaling AI, track metrics that show its value. For example, AI can drastically cut down the time needed for document reviews 1. Other key indicators include higher success rates in competitive auctions and fewer unexpected issues after closing deals - both signs that AI is effectively identifying risks earlier 1.

Financial due diligence is a particularly measurable area for assessing ROI. Compare the time saved using AI against traditional manual processes 3. In 2024, 64% of deal teams reported using AI in at least one diligence workstream, reflecting growing confidence in its benefits 1. These metrics not only showcase efficiency gains but also guide decisions on scaling and governance.

When scaling AI usage, set achievable timelines. Start with a pilot phase lasting four to six months, focusing on select workflows. Gradually expand to full deployment over 12 to 18 months, ensuring the system performs well even with complex data sets 14. Early adopters have reported time savings of 25–35% in targeted workflows, demonstrating significant efficiency improvements 14.
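
The time-saving comparison is straightforward arithmetic, sketched below. The hours in the example are invented for illustration. The 25–35% band is the range quoted above for early adopters, used here only as a reference point.

```python
def time_saved_pct(manual_hours: float, ai_assisted_hours: float) -> float:
    """Percentage of manual effort saved on a workflow."""
    if manual_hours <= 0:
        raise ValueError("manual_hours must be positive")
    return 100 * (manual_hours - ai_assisted_hours) / manual_hours


def within_reported_range(
    saved_pct: float, low: float = 25.0, high: float = 35.0
) -> bool:
    """True if the saving falls inside the band reported by early adopters."""
    return low <= saved_pct <= high


# Illustrative figures only: 400 hours manually versus 280 with AI support.
saving = time_saved_pct(400, 280)
print(saving, within_reported_range(saving))
```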

Monitoring and Auditing AI Performance

Once your team has been trained and AI tools are integrated into your processes, the next step is ensuring these systems remain effective and reliable over time. AI models don’t stay static – their performance can decline as market conditions evolve, data patterns shift, or new edge cases arise. Without regular monitoring, decisions risk being based on outdated or skewed insights. This makes ongoing oversight a critical part of maintaining dependable workflows.

Setting Up Continuous Risk Monitoring

Start by defining performance benchmarks for your AI tools. Keep an eye on operational metrics like API uptime (aiming for 99.9% or higher), latency at the 95th and 99th percentiles, and request success rates. Pair these with model-specific metrics such as precision, recall, F1 scores, and confidence score distributions. Importantly, these should be assessed using live production data, not just controlled test sets 61129.
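
The benchmarks above can be computed from logged production data with a few lines of code. The sketch below uses the nearest-rank method for percentiles and treats labels as binary (1 for a flagged item, 0 otherwise). Both are simplifying assumptions, and the function names are illustrative.

```python
import math


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile, e.g. pct=95 for P95 latency."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def precision_recall_f1(
    y_true: list[int], y_pred: list[int]
) -> tuple[float, float, float]:
    """Precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denominator = precision + recall
    f1 = 2 * precision * recall / denominator if denominator else 0.0
    return precision, recall, f1
```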

Watch for signs of model drift – where real-world data begins to deviate from the conditions under which the model was trained. For instance, a fraud detection model built pre-pandemic might struggle to identify new attack patterns that emerged during or after that period. The financial stakes are high: in 2024, global losses tied to AI hallucinations were estimated at around £53 billion 6.
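
One common way to quantify drift in a single numeric input is the Population Stability Index (PSI). This technique is not named in the text above and is offered only as one option. The sketch compares the distribution of a feature at training time with its distribution in production. The bin count and the small `eps` floor are assumptions.

```python
import math


def psi(
    expected: list[float],
    actual: list[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range live values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each share at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that cut-off is a convention, not a standard, and should be calibrated per feature.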

Beyond accuracy, fairness and bias must also be monitored. Metrics like demographic parity and equalised odds can help detect discriminatory outcomes. This is particularly vital given that over 40% of AI vendors reportedly struggle to explain decisions in high-stakes scenarios 6. To maintain control, consider measures like version pinning to prevent unintended updates to models. Regular reviews with vendors can also ensure the tools meet agreed service levels 6.
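
Both fairness metrics can be expressed as gaps between groups. In the sketch below, the demographic parity gap is the spread in positive-prediction rates across groups. The equalised odds gap is the larger of the spreads in true-positive and false-positive rates. Binary labels and the function names are assumptions made for illustration.

```python
def demographic_parity_gap(y_pred: list[int], groups: list[str]) -> float:
    """Spread in positive-prediction rate across groups (0 = parity)."""
    rates = []
    for g in set(groups):
        preds = [p for p, gg in zip(y_pred, groups) if gg == g]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def equalised_odds_gap(
    y_true: list[int], y_pred: list[int], groups: list[str]
) -> float:
    """Larger of the between-group spreads in TPR and FPR (0 = equal odds)."""

    def rate(group: str, label: int) -> float:
        # Positive-prediction rate among this group's cases with this label.
        preds = [
            p for t, p, gg in zip(y_true, y_pred, groups)
            if gg == group and t == label
        ]
        return sum(preds) / len(preds) if preds else 0.0

    unique = set(groups)
    tpr = [rate(g, 1) for g in unique]
    fpr = [rate(g, 0) for g in unique]
    return max(max(tpr) - min(tpr), max(fpr) - min(fpr))
```

Either gap can feed directly into the agreed bias thresholds used in vendor reviews.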

| Monitoring Category | Metrics | Purpose |
|---|---|---|
| Operational | P99 Latency, Error Rates, Uptime | Ensures reliable production performance 6. |
| Model Quality | Precision, Recall, F1 Score, Confidence Scores | Confirms the accuracy of AI-driven outputs 6. |
| Fairness | Demographic Parity, Equalised Odds | Identifies potential bias or unfair outcomes 6. |
| Financial | Inference Cost per Workflow, Gross Margin | Evaluates the economic efficiency of the AI system 11. |
| Security | Prompt Injection Resistance, Penetration Test Results | Protects against emerging AI-specific threats 6. |

Using Domain-Specific AI Frameworks

While general performance metrics provide a useful snapshot, certain industries require more detailed insights, especially for high-stakes decisions. Standard AI tools often lack the depth needed for rigorous evaluations. Domain-specific frameworks, like those from Axion Lab, offer tailored analyses in areas such as legal, financial, operational, and digital due diligence 15. These frameworks go beyond surface-level summaries, linking findings directly to source documents and making results fully auditable for decision-makers.

"Many DD reports arrive at the end of the late stages of price negotiations. It becomes a checkbox, not a decision input, creating blind spots and leaving value on the table."

– Sergei Maslennikov, Co-founder, Axion Lab 15

This traceability will become even more crucial when the EU AI Act's high-risk obligations begin to apply in August 2026. Non-compliance with the Act could result in penalties of up to €35 million or 7% of global annual revenue 6. Domain-specific frameworks can help organisations meet these stringent requirements by providing cryptographic evidence and automated "evidence packs" that verify controls for specific model versions 6. As regulators increase their scrutiny of algorithmic decision-making, this shift from vendor self-certification to evidence-backed monitoring is emerging as a new standard. By adopting continuous audits and leveraging specialised frameworks, organisations can keep their due diligence processes robust and trustworthy.

Conclusion: Best Practices for Managing AI Risks

Managing AI risks effectively takes more than technical fixes. Companies that succeed in this area treat AI as a strategic priority, not just a technical tool. This involves carefully evaluating AI tools, setting up clear governance structures, preparing teams for AI integration, and continuously monitoring how models perform. Such a strategy is essential to address the high financial and operational stakes in today’s AI-focused markets.

With a growing share of mergers and acquisitions (M&A) activity being driven by AI - and many of these deals failing to meet expectations - the importance of thorough due diligence cannot be overstated. Poor technical assessments can lead to costly mistakes, often tied to issues like weak data origins, unsustainable models, or risks related to retaining skilled talent 29. To mitigate these challenges, adopting a four-dimension framework is crucial. This framework evaluates:

- Data assets: Ensuring data quality and reliability.

- Model quality: Assessing the robustness and scalability of AI models.

- AI talent depth: Gauging the strength and stability of the AI team.

- Regulatory compliance: Ensuring adherence to relevant laws and standards 29.

"AI due diligence does not replace human judgment. It amplifies it by handling the data-intensive analysis that consumes 60 to 70 per cent of a DD team's time." – Dr. Leigh Coney, Founder, WorkWise Solutions 3

This balance - leveraging AI for efficiency while preserving human judgment - forms the backbone of a robust risk management strategy.

Specialised tools can play a vital role in implementing this framework. For example, platforms like Axion Lab offer domain-specific solutions that provide traceable and auditable insights early in the deal process. This ensures due diligence is thorough and not reduced to a mere formality 15.

As regulations evolve, particularly with the EU AI Act's high-risk requirements set to apply from August 2026, companies with strong governance and evidence-based monitoring in place will be well-prepared. These firms will not only comply with new legal requirements but also position themselves to harness AI’s potential more effectively.