Generative AI is transforming private market deals, speeding up processes like due diligence, contract drafting, and financial analysis. However, it introduces serious risks that firms must address to avoid costly mistakes. Key concerns include:

- Data security: Sensitive information can be exposed through public AI tools or prompt injection attacks. Legal privilege may also be lost.

- Accuracy issues: AI outputs are prone to errors or "hallucinations", especially in complex legal and financial contexts, which can lead to mispriced deals or court sanctions.

- Regulatory challenges: AI systems often lack transparency, making compliance with GDPR and similar regulations difficult.

To mitigate these risks, firms should use secure AI tools, implement human oversight, and ensure vendor accountability. A structured approach, including strict policies and regular audits, is essential for balancing AI's benefits with its potential pitfalls.

Main Risks of Generative AI in Private Market Transactions

Private market firms face three main risk areas when using generative AI: data security issues, accuracy problems, and regulatory challenges. Each carries unique threats to deal integrity and firm accountability.

Data Security and Privacy Concerns

Using AI tools to handle confidential deal information can jeopardise legal professional privilege under English law, and equivalent protections in other jurisdictions. Recent rulings on both sides of the Atlantic highlight this risk. In early 2026, the US case United States v. Heppner determined that communications shared with a public AI tool (Claude) lost attorney-client privilege because confidentiality had been compromised. In the UK, the Upper Tribunal has suggested that uploading confidential material to public AI platforms may be akin to making it publicly accessible 3.

Many public AI platforms have terms allowing providers to store, analyse, or reuse user inputs for model training, creating a real risk of unintentionally exposing sensitive deal data or proprietary insights 31. There is also the threat of prompt injection, in which malicious instructions, for example hidden inside an uploaded document, masquerade as legitimate ones in order to extract sensitive information. Cross-border processing by AI tools can also breach non-disclosure agreements that restrict where data may be held 1.

Internal risks are equally concerning. These include accidental uploads to non-proprietary or open-source AI models and the use of unsecured AI-powered tools like notetakers, both of which can compromise confidential data 31.

"Publicly available AI tools should be treated as external recipients. If general disclosure would concern you in that context, it should concern you here." – DWF Group 3

While data security is a major concern, ensuring the accuracy of AI outputs is another significant hurdle.

Accuracy and AI Hallucination Problems

Vendors claim hallucination rates of less than 1% for basic summaries 4, but reported rates climb to between 69% and 88% for complex legal or financial tasks 4. This gap between vendor claims and real-world performance poses serious risks to transaction accuracy, and fabricated citations or incorrect data leave legal and financial professionals exposed to malpractice claims.

Between 2023 and early 2026, over 729 cases of AI hallucinations in court filings were documented 4. By late 2025, in one case, a sanction exceeding £100,000 had been imposed for AI-caused errors 4.

"AI hallucinations won't disappear. They're baked into how large language models work." – Artificial Lawyer 4

These hallucinations can disrupt early deal processes, appearing in Confidential Information Memorandums, management presentations, or AI-generated financial models 4. Errors in litigation risk assessments or regulatory findings can lead buyers to undervalue or overvalue contingent liabilities by millions, or even waive critical closing conditions 4. The time spent proofreading and verifying AI outputs often cancels out any supposed efficiency gains, leaving professionals to question the utility of such tools 5.

While technical inaccuracies can lead to financial or legal problems, regulatory compliance adds another layer of complexity.

Regulatory and Compliance Challenges

Under UK GDPR, when AI-driven decisions significantly affect individuals, firms must inform the people concerned and have a valid basis for the processing, such as consent 67. Regulators stress that "AI agency does not mean the removal of human, and therefore organisational, responsibility for data processing" 67.

Generative AI often struggles with explainability, making it hard to show how a decision was reached. That opacity also makes "purpose creep", where an AI system accesses more data than a task requires, harder to detect 1. In addition, AI agents may inadvertently process or infer special category data, such as health information, bringing the processing within the conditions of GDPR Article 9.
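
One practical guard against purpose creep is data minimisation enforced in code: each approved task purpose gets an explicit allowlist of fields, and everything else is stripped before a record reaches the model. The sketch below assumes records arrive as Python dictionaries; the purposes, field names and the `minimise` helper are hypothetical.

```python
from typing import Any

# Hypothetical purposes and field names, for illustration only.
PURPOSE_ALLOWLIST: dict[str, frozenset[str]] = {
    "revenue_summary": frozenset({"company", "fiscal_year", "revenue", "ebitda"}),
    "cap_table_review": frozenset({"company", "share_class", "shares_outstanding"}),
}


def minimise(record: dict[str, Any], purpose: str) -> dict[str, Any]:
    """Keep only the fields approved for this purpose; refuse unknown purposes."""
    allowed = PURPOSE_ALLOWLIST.get(purpose)
    if allowed is None:
        raise PermissionError(f"no approved data scope for purpose {purpose!r}")
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "company": "TargetCo",
    "fiscal_year": 2025,
    "revenue": 12_500_000,
    "ebitda": 2_100_000,
    "ceo_medical_notes": "<omitted>",  # special category data: never sent to the model
}
print(minimise(record, "revenue_summary"))
# {'company': 'TargetCo', 'fiscal_year': 2025, 'revenue': 12500000, 'ebitda': 2100000}
```

Refusing unknown purposes, rather than passing everything through by default, means a new use case must be approved before it can see any data at all.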

In ecosystems involving multiple vendors, defining roles like "controller" and "processor" becomes complicated. This ambiguity can increase legal liability in cases of data breaches. Moreover, AI tools may use protected data without proper consent or process it in ways that breach non-disclosure agreements or client engagement terms 1.

Taken together, regulatory challenges amplify both security and accuracy risks, threatening the overall integrity of private market transactions.


How Generative AI Risks Affect Private Market Deals

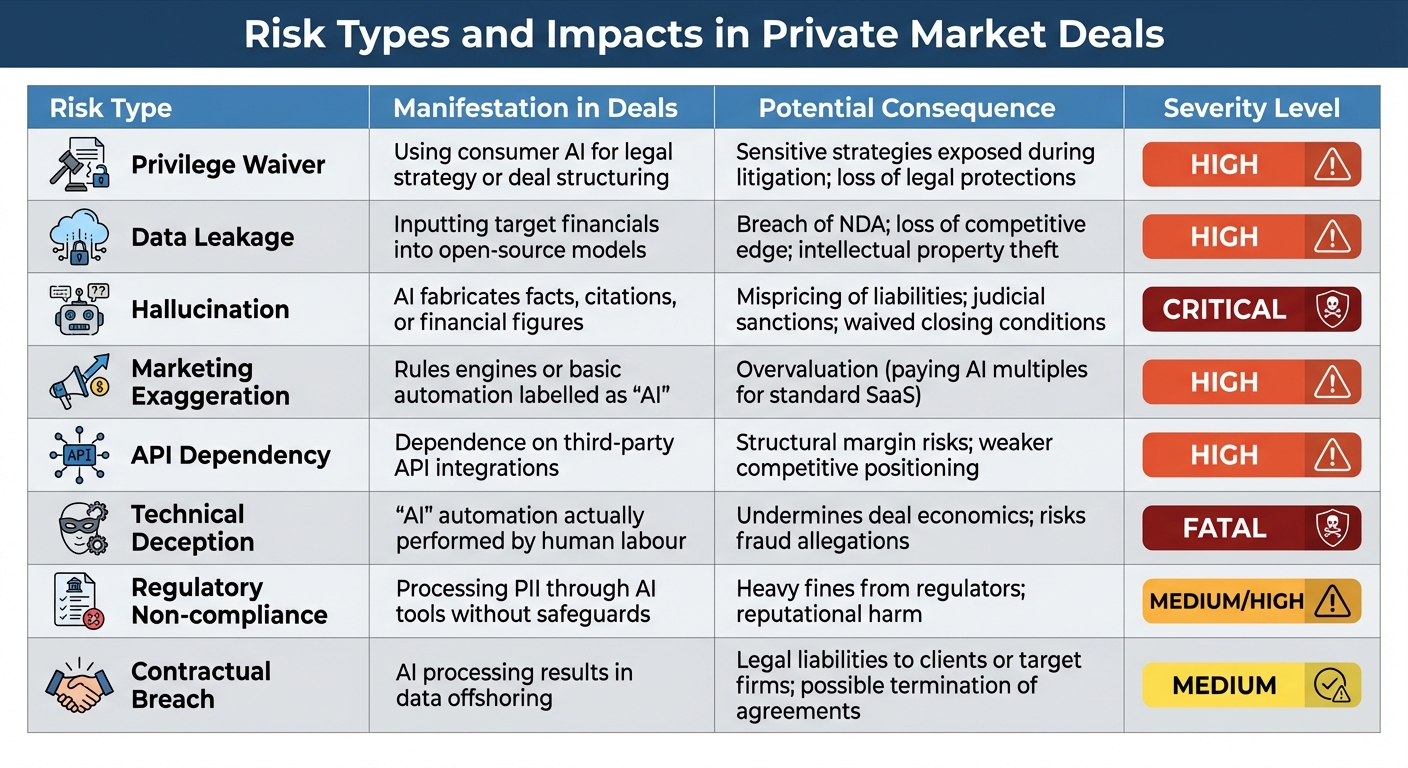

Generative AI Risk Types and Impacts in Private Market Deals

The interplay between data security, accuracy, and regulatory compliance creates a web of challenges that can disrupt private market deals. These risks often intersect during deal execution, potentially leading to disputes or even derailing transactions entirely. Below is a closer look at how these risks influence deal outcomes.

Data security and privilege risks become especially acute in litigation. AI prompts, chat logs, and outputs are increasingly treated as discoverable evidence in disputes such as earnout disagreements or breach-of-contract claims 2. In February 2026, for instance, the US ruling in United States v. Heppner highlighted how AI-generated documents could lose legal privilege, complicating deal disputes 2. In M&A litigation, opposing counsel now actively seek "AI-Assisted Deal Materials", such as conversation logs and AI-generated analyses, to find evidence that could weaken the other party's position 2.

Accuracy failures can have a direct and costly impact on deal valuations. Errors like hallucinations in financial models or inaccurate market sizing can misprice assets or liabilities by millions of pounds. Additionally, "AI washing" - where companies overstate their AI capabilities - can lead to inflated valuations. For example, targets may be valued at AI multiples (10–30× revenue) instead of standard SaaS multiples (3–8×). When these claims are debunked, enterprise values may drop by 40–70% 48. The cost to fix these issues can vary widely: addressing a "capability gap" (where AI is less advanced than advertised) can cost between £400,000 and £1.5 million over 12–24 months, while cases of "fundamental technical deception" (where manual processes are disguised as AI-powered) can result in remediation costs exceeding £8 million 8.
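
The mechanics behind these swings are plain revenue-multiple arithmetic: enterprise value is revenue times the multiple, so re-rating from a paid multiple m_paid to a fair one m_fair cuts value by 1 − m_fair / m_paid. The sketch below uses a hypothetical target with £10 million of revenue and assumes a fair SaaS multiple of 6×, inside the 3–8× range quoted; both figures are illustrative, not market data.

```python
def enterprise_value(revenue: float, multiple: float) -> float:
    """Revenue-multiple valuation: EV = revenue x multiple."""
    return revenue * multiple


def rerating_drop(paid_multiple: float, fair_multiple: float) -> float:
    """Fractional fall in EV when the multiple is re-rated from paid to fair."""
    return 1 - fair_multiple / paid_multiple


REVENUE = 10_000_000  # hypothetical target revenue, in £
FAIR_MULTIPLE = 6     # assumed SaaS multiple, inside the 3-8x range in the text

for paid in (10, 15, 20):  # multiples within the 10-30x "AI" range
    overpaid = enterprise_value(REVENUE, paid) - enterprise_value(REVENUE, FAIR_MULTIPLE)
    print(f"{paid}x: overpaid £{overpaid:,.0f}, EV drop {rerating_drop(paid, FAIR_MULTIPLE):.0%}")
# 10x: overpaid £40,000,000, EV drop 40%
# 15x: overpaid £90,000,000, EV drop 60%
# 20x: overpaid £140,000,000, EV drop 70%
```

Under these assumptions, paying 10–20× for a business that merits 6× implies a 40–70% fall once the "AI" premium is stripped out, which is one way the range cited above can arise.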

Regulatory non-compliance adds another layer of risk, creating both immediate and long-term liabilities. For example, entering sensitive deal data into public AI tools could result in data "offshoring", potentially violating non-disclosure agreements or client contracts 1. Regulators are also stepping up enforcement. In February 2025, the SEC created its Cyber and Emerging Technologies Unit, a team of about 30 enforcement specialists whose remit includes AI-related fraud, and authorities are increasingly pursuing criminal cases over false claims about AI capabilities in investment contexts 8.

Risk Types and Impacts: Comparison Table

| Risk Type | Manifestation in Deals | Potential Consequence | Severity Level |
|---|---|---|---|
| Privilege Waiver | Using consumer AI for legal strategy or deal structuring | Sensitive strategies exposed during litigation; loss of legal protections | High |
| Data Leakage | Inputting target financials into open-source models | Breach of NDA; loss of competitive edge; intellectual property theft | High |
| Hallucination | AI fabricates facts, citations, or financial figures | Mispricing of liabilities; judicial sanctions; waived closing conditions | Critical |
| Marketing Exaggeration | Rules engines or basic automation labelled as "AI" | Overvaluation (paying AI multiples for standard SaaS) | High |
| API Dependency | Dependence on third-party API integrations | Structural margin risks; weaker competitive positioning | High |
| Technical Deception | "AI" automation actually performed by human labour | Undermines deal economics; risks fraud allegations | Fatal |
| Regulatory Non-compliance | Processing PII through AI tools without safeguards | Heavy fines from regulators; reputational harm | Medium/High |
| Contractual Breach | AI processing results in data offshoring | Legal liabilities to clients or target firms; possible termination of agreements | Medium |

These risks highlight the complexity of integrating generative AI into private market deals. Missteps in any of these areas can have serious financial, legal, and reputational consequences.

How to Reduce Generative AI Risks

Reducing the risks associated with generative AI requires a structured approach that emphasises data security, human involvement, and holding vendors accountable. These steps not only protect sensitive information but also help maintain the integrity of processes like deal structuring. For private market firms, the key is to view AI as an assistant, not a decision-maker, and to create safeguards that minimise potential failures.

Using Secure AI Tools

The first step in reducing risk is selecting AI tools that meet stringent security standards. Look for tools that provide end-to-end encryption, an independent SOC 2 report (a Type II report, which tests controls over a period of time, gives stronger assurance than a point-in-time Type I), compliance with GDPR and the EU AI Act, and strict "Zero Data Retention" and "No Model Training" policies.

Before uploading any documents to an AI system, ensure sensitive information is redacted. Automated pipelines can help by removing or pseudonymising personal details like National Insurance numbers, IBANs, and names. For additional security, consider deploying AI within a Virtual Private Cloud. This setup, combined with egress controls and time-limited upload tokens, strengthens data protection. Domain-specific platforms like Axion Lab (https://axionlab.ai) are built with these principles in mind, offering tools for due diligence that include traceable evidence and compliance with current regulations.
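
An automated redaction step of this kind can be sketched in a few lines. The version below is an illustration under stated assumptions: the regular expressions are simplified (they do not apply every National Insurance prefix rule or check IBAN checksums), names come from a supplied list because regexes cannot find names reliably, and pseudonyms are keyed HMAC-SHA256 digests, so the same value always maps to the same placeholder. The `redact` and `pseudonym` helpers are hypothetical; a production pipeline would more likely rely on a dedicated PII-detection tool.

```python
import hashlib
import hmac
import re

# Simplified patterns (assumptions): no NINO prefix rules, no IBAN checksum check.
PATTERNS = {
    "IBAN": re.compile(r"\b[A-Z]{2}\d{2}(?:\s?[A-Z0-9]{4}){2,7}(?:\s?[A-Z0-9]{1,3})?\b"),
    "NINO": re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b"),
}


def pseudonym(kind: str, value: str, secret: bytes) -> str:
    """Deterministic placeholder: the same value (ignoring spacing) maps to the same token."""
    normalised = re.sub(r"\s+", "", value).upper()
    digest = hmac.new(secret, normalised.encode(), hashlib.sha256).hexdigest()[:8]
    return f"[{kind}_{digest}]"


def redact(text: str, secret: bytes, known_names: list[str]) -> str:
    """Replace IBANs, NI numbers and listed names with keyed pseudonyms."""
    for kind, pattern in PATTERNS.items():
        text = pattern.sub(lambda m, k=kind: pseudonym(k, m.group(0), secret), text)
    # Longest names first, so "Jane Smith" is replaced before a bare "Jane".
    for name in sorted(known_names, key=len, reverse=True):
        text = re.sub(re.escape(name), lambda m: pseudonym("NAME", m.group(0), secret), text)
    return text


secret = b"example-only-key"  # assumption: load from a secrets manager in practice
print(redact("Jane Smith (NI QQ 12 34 56 C) banks with GB29 NWBK 6016 1331 9268 19.",
             secret, ["Jane Smith"]))
```

Keyed pseudonyms keep references consistent across documents (the same person always becomes the same token) while preventing anyone without the key from reversing them by brute force.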

Once secure tools are in place, the next critical step is to ensure robust human oversight.

Setting Up Human Oversight and Validation

Human oversight acts as a vital safety net for AI-driven processes. Introduce checkpoints at key stages - such as initial screening, diligence findings, and Investment Committee reviews - where humans validate the AI's output. This approach divides responsibilities: AI focuses on repetitive tasks like extracting data and summarising KPIs, while humans maintain control over critical decisions like investment strategies and valuations 12.
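
The division of labour and the stage checkpoints can be encoded so that a deal cannot move on until a named reviewer has validated every AI output at the current stage. In this sketch the stage names follow the text, while the task labels, the `Deal` and `AIOutput` classes and the gating rule are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    SCREENING = "initial screening"
    DILIGENCE = "diligence findings"
    INVESTMENT_COMMITTEE = "investment committee review"


# Assumed task types AI may draft; decisions such as valuation stay with humans.
AI_ASSISTED_TASKS = {"data_extraction", "kpi_summary"}


@dataclass
class AIOutput:
    stage: Stage
    task: str
    content: str
    validated_by: Optional[str] = None  # name of the human reviewer, once checked


@dataclass
class Deal:
    name: str
    outputs: list[AIOutput] = field(default_factory=list)

    def add_ai_output(self, output: AIOutput) -> None:
        if output.task not in AI_ASSISTED_TASKS:
            raise ValueError(f"{output.task!r} is reserved for human decision-makers")
        self.outputs.append(output)

    def checkpoint_passed(self, stage: Stage) -> bool:
        """True only if every AI output recorded at this stage has a named reviewer."""
        return all(o.validated_by for o in self.outputs if o.stage is stage)


deal = Deal("Project Example")  # hypothetical deal
summary = AIOutput(Stage.DILIGENCE, "kpi_summary", "Draft KPI summary")
deal.add_ai_output(summary)
print(deal.checkpoint_passed(Stage.DILIGENCE))  # False: not yet reviewed
summary.validated_by = "Deal team reviewer"
print(deal.checkpoint_passed(Stage.DILIGENCE))  # True
```

A stage with no AI outputs passes trivially, since there is nothing to validate; attempting to log an AI output for a task such as `"valuation"` raises an error instead of being silently accepted.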

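A particularly useful technique here is Retrieval-Augmented Generation (RAG), in which the model answers from passages retrieved from the firm's own documents. When configured to cite its sources, a RAG system can tie every AI-generated claim to a specific, auditable source document, down to the page and line number. If no verifiable source is found, the system should say so rather than generate an answer, reducing the risk of incorrect or misleading outputs. Oversight also protects how professionals learn: if AI absorbs the groundwork, junior staff lose the hands-on experience that builds the judgement reviewers depend on. As Lord Clement-Jones, co-founder of the All-Party Parliamentary Group on AI, observed: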

"What's happening is that the opportunities for training and being on the spot with your senior people are being restricted. As AI crunches the data, the associate doesn't quite get that leg up while they are learning" 10.

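Returning to the RAG safeguard, the rule that every answer needs a verifiable source can be enforced after generation by checking each citation against the underlying documents. This is a minimal sketch, not a full RAG pipeline: retrieval and generation are out of scope, documents are assumed to be stored as pages of lines, and the model is assumed to return each claim with a document ID, page, line and supporting quote. All names (`Citation`, `Claim`, `verify`, `SPA-v3`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical storage: doc_id -> pages -> lines (pages and lines numbered from 1).
Corpus = dict[str, list[list[str]]]
NO_SOURCE = "No verifiable source found."


@dataclass(frozen=True)
class Citation:
    doc_id: str
    page: int
    line: int
    quote: str  # exact text the model says supports the claim


@dataclass(frozen=True)
class Claim:
    text: str
    citation: Optional[Citation]


def verify(claim: Claim, corpus: Corpus) -> str:
    """Return the claim with its reference if the quote is found where cited; else refuse."""
    c = claim.citation
    if c is None or not c.quote:
        return NO_SOURCE
    pages = corpus.get(c.doc_id, [])
    if not 1 <= c.page <= len(pages):
        return NO_SOURCE
    lines = pages[c.page - 1]
    if not 1 <= c.line <= len(lines) or c.quote not in lines[c.line - 1]:
        return NO_SOURCE
    return f"{claim.text} [{c.doc_id}, p.{c.page}, l.{c.line}]"


corpus: Corpus = {
    "SPA-v3": [  # hypothetical share purchase agreement
        ["Clause 1 Definitions", "Completion Date means 30 June"],
        ["Clause 7 Warranties", "Warranty cap of 100% of consideration"],
    ]
}
grounded = Claim("The warranty cap is 100% of consideration.",
                 Citation("SPA-v3", 2, 2, "Warranty cap of 100%"))
unsupported = Claim("Completion is on 1 July.", Citation("SPA-v3", 1, 2, "1 July"))
print(verify(grounded, corpus))     # The warranty cap is 100% of consideration. [SPA-v3, p.2, l.2]
print(verify(unsupported, corpus))  # No verifiable source found.
```

A substring match only proves that the quote exists where it is cited; whether it actually supports the claim is still a question for the human reviewer.
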
Vendor Audits and Custom AI Models

In addition to secure tools and human oversight, vendor audits are essential to managing AI risks. Conduct thorough security audits of AI vendors and ensure service contracts include clauses for data deletion certification, audit rights for SOC 2 and ISO/IEC 27001 reports, and guarantees against using your data for model training. Vendors should also provide clear audit trails and source attribution for all outputs. Cheryl Kosche, SVP at Lockton, highlights the potential risks:

"AI remains a relatively unchartered legal and regulatory territory, and your firm needs to be prepared in case of an unforeseen lawsuit or regulatory action" 9.

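The contractual protections listed for vendor audits lend themselves to a simple checklist that flags gaps before a contract is signed. The clause keys and the `VendorContract` structure are hypothetical; real review is done by counsel against the contract text, and this sketch only tracks whether each protection has been confirmed.

```python
from dataclasses import dataclass

# Protections named in the text; the keys are illustrative labels.
REQUIRED_CLAUSES = {
    "deletion_certification": "Certification that client data has been deleted",
    "audit_rights": "Right to obtain SOC 2 and ISO/IEC 27001 reports",
    "no_model_training": "Guarantee that client data is not used for model training",
    "output_traceability": "Audit trails and source attribution for all outputs",
}


@dataclass
class VendorContract:
    vendor: str
    confirmed_clauses: set[str]


def missing_clauses(contract: VendorContract) -> list[str]:
    """List the required protections not yet confirmed in this contract."""
    return [text for key, text in REQUIRED_CLAUSES.items()
            if key not in contract.confirmed_clauses]


draft = VendorContract("ExampleVendor Ltd",  # hypothetical vendor
                       {"deletion_certification", "no_model_training"})
for gap in missing_clauses(draft):
    print("Missing:", gap)
```

Keeping the required protections in one table means a new requirement, such as a data-residency clause, only has to be added in one place to be checked for every vendor.
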
To further reduce risks, consider using domain-specific AI models tailored to private market needs. These models minimise reliance on opaque "black box" decision-making and offer more detailed insights for tasks like due diligence. Implement least-privilege access using role-based access control (RBAC), with session-based permissions for file uploads instead of permanent admin tokens. Regularly monitor for model drift to ensure the AI remains accurate and reliable when working with live data, not just curated benchmarks 11.
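
Least-privilege access with session-based upload permissions can be sketched as a role check followed by a short-lived, signed grant, so no one holds a standing admin token. The role names, the 15-minute lifetime and the token format are assumptions for illustration, and user IDs are assumed not to contain `|`; in production this job would normally fall to the firm's identity provider rather than hand-rolled tokens.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

# Hypothetical role -> permission mapping.
ROLE_PERMISSIONS = {
    "analyst": {"read_summary"},
    "deal_partner": {"read_summary", "upload_file"},
    "model_owner": {"read_logs"},
}
SIGNING_KEY = secrets.token_bytes(32)  # regenerated per process in this sketch


def _sign(payload: str) -> str:
    return hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_upload_token(user: str, role: str, ttl_seconds: int = 900,
                       now: Optional[float] = None) -> str:
    """Grant a time-limited upload permission, only to roles that hold it."""
    if "upload_file" not in ROLE_PERMISSIONS.get(role, set()):
        raise PermissionError(f"role {role!r} may not upload files")
    issued = time.time() if now is None else now
    payload = f"{user}|upload_file|{int(issued + ttl_seconds)}"
    return f"{payload}|{_sign(payload)}"


def check_upload_token(token: str, now: Optional[float] = None) -> bool:
    """Accept the token only if its signature matches and it has not expired."""
    parts = token.split("|")
    if len(parts) != 4:
        return False
    user, action, expires, sig = parts
    expected = _sign(f"{user}|{action}|{expires}")
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    current = time.time() if now is None else now
    return action == "upload_file" and current < int(expires)


token = issue_upload_token("jdoe", "deal_partner")
print(check_upload_token(token))  # True within the 15-minute window
```

Because the expiry is inside the signed payload, a user cannot extend a grant by editing the token, and an analyst role never receives an upload grant in the first place.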

Building Low-Risk AI Frameworks for Private Markets

Integrating AI into private markets requires a thoughtful approach that ensures both efficiency and security. The objective is to incorporate AI into deal processes while upholding the rigorous compliance and data protection standards expected in institutional investment. This involves establishing a governance structure from the start, classifying data based on sensitivity, and ensuring every AI deployment is fully auditable. Here's how this framework can be put into practice for deal structuring.

Steps for Adopting AI in Deal Structuring

These steps are designed to address risks like data breaches, inaccuracies, and compliance issues.

Start by creating a cross-functional AI Oversight Committee with representatives from investment, operations, compliance, and legal teams. This committee should draft a formal AI Policy Document that defines approved tools, acceptable use cases, and accountability. Assign specific roles such as:

- Data Owners: Deal partners responsible for approving the use of sensitive files.

- Data Stewards: Operations leads who enforce data classification and retention policies.

- Model Owners: Technical experts managing access and maintaining system logs.

- Compliance Officers: Specialists ensuring adherence to SEC rules, the EU AI Act, and GDPR 13.

Next, establish a tiered data classification framework that categorises data by sensitivity: Tier 1 for deal-related material non-public information (MNPI), Tier 2 for portfolio data, Tier 3 for limited partner (LP) data, and Tier 4 for market intelligence. This allows security measures to be matched to the level of risk. The issue is already on regulators' radar: in 2025, 34% of private equity examination letters included questions about AI governance and data handling, up from just 8% in 2023 14.
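
The tiering can be expressed as a policy table that decides whether AI processing is allowed, which also makes a Tier 4-only pilot easy to enforce. The four tiers follow the text; the control names attached to each tier, and the `may_process` helper, are assumptions chosen for illustration.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Lower number = more sensitive, following the tiers in the text."""
    DEAL_MNPI = 1
    PORTFOLIO = 2
    LP_DATA = 3
    MARKET_INTELLIGENCE = 4


# Hypothetical controls required before AI may touch each tier.
REQUIRED_CONTROLS = {
    Tier.DEAL_MNPI: {"vpc_only", "zero_retention", "pseudonymise", "partner_approval"},
    Tier.PORTFOLIO: {"vpc_only", "zero_retention", "pseudonymise"},
    Tier.LP_DATA: {"vpc_only", "zero_retention", "pseudonymise"},
    Tier.MARKET_INTELLIGENCE: {"no_training_flag"},
}

# The pilot starts with Tier 4 only; other tiers are added as the rollout proceeds.
ENABLED_TIERS = {Tier.MARKET_INTELLIGENCE}


def may_process(tier: Tier, active_controls: set[str]) -> bool:
    """Allow AI processing only for enabled tiers whose required controls are all active."""
    return tier in ENABLED_TIERS and REQUIRED_CONTROLS[tier] <= active_controls


print(may_process(Tier.MARKET_INTELLIGENCE, {"no_training_flag"}))  # True
print(may_process(Tier.DEAL_MNPI, {"vpc_only", "zero_retention",
                                   "pseudonymise", "partner_approval"}))  # False: not yet enabled
```

Widening the rollout then means adding a tier to `ENABLED_TIERS` once its controls are in place, rather than changing code paths.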

Begin by piloting AI systems with Tier 4 data to test controls before handling more sensitive information. A typical implementation timeline spans 11–17 weeks, divided as follows:

- 2–3 weeks for drafting policies and data classification.

- 3–4 weeks for vendor security assessments.

- 2–3 weeks for pilot deployment.

- 4–8 weeks per tier for gradual rollout 14.
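
Adding up the phases shows how the total depends on the number of tiers rolled out. Assuming the phases run back to back (an assumption, since some could overlap), set-up takes 7–10 weeks and one rollout tier brings the total to 11–18 weeks, in line with the 11–17 weeks quoted apart from one week at the upper end; each further tier adds another 4–8 weeks.

```python
# (min, max) weeks per phase, as listed above; phases assumed to run back to back.
SETUP_PHASES = {
    "policy drafting and data classification": (2, 3),
    "vendor security assessment": (3, 4),
    "pilot deployment": (2, 3),
}
ROLLOUT_PER_TIER = (4, 8)


def programme_weeks(tiers: int) -> tuple[int, int]:
    """Total (min, max) weeks for set-up plus a gradual rollout across `tiers` tiers."""
    setup_min = sum(lo for lo, _ in SETUP_PHASES.values())
    setup_max = sum(hi for _, hi in SETUP_PHASES.values())
    return (setup_min + tiers * ROLLOUT_PER_TIER[0],
            setup_max + tiers * ROLLOUT_PER_TIER[1])


for tiers in range(1, 5):
    print(tiers, programme_weeks(tiers))
# 1 (11, 18)
# 2 (15, 26)
# 3 (19, 34)
# 4 (23, 42)
```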

For example, in late 2025, a venture capital firm piloted an AI copilot for cap table and investor commitment summaries. By pseudonymising data, deploying in a virtual private cloud (VPC) with "do-not-train" flags, and limiting retention of redacted inputs to 90 days, the firm cut its time-to-first-assessment by 42% without any incidents involving sensitive data 13.

Adopt a zero-retention architecture where data is processed in temporary environments destroyed after each session, rather than relying solely on deletion policies.
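
At the application level, session-scoped processing might look like the sketch below: each session works in a private temporary directory that is deleted when the session ends, even on error. This is a local illustration only. It does not erase copies held by a remote model provider or guarantee secure erasure on disk, and in practice the disposable environment would more likely be a container or virtual machine torn down after each session. The `process` function is a hypothetical placeholder for the AI step.

```python
import os
import tempfile
from contextlib import contextmanager
from typing import Iterator


@contextmanager
def ephemeral_session() -> Iterator[str]:
    """Yield a scratch directory that is removed when the session ends, even on error.

    On POSIX systems, tempfile creates it accessible only by the current user.
    """
    with tempfile.TemporaryDirectory(prefix="deal-session-") as workdir:
        yield workdir


def process(document: bytes) -> int:
    """Hypothetical session: stage the upload, run the AI step, return a result."""
    with ephemeral_session() as workdir:
        path = os.path.join(workdir, "upload.bin")
        with open(path, "wb") as fh:
            fh.write(document)
        # The (hypothetical) model call on the redacted file would go here.
        return os.path.getsize(path)


print(process(b"redacted deal memo"))  # 18
```

Tying cleanup to the context manager, rather than to a separate deletion job, means data cannot outlive the session because someone forgot to run a purge.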

"Security is the #1 objection PE firms raise when evaluating AI tools - and for good reason" 14.

In one instance, a £6 billion private equity firm halted an AI initiative in 2025 after discovering that the vendor's "enterprise security" relied on shared infrastructure: deal data was processed on the same GPU clusters as competing firms' data 14. Rather than relying on vague assurances, verify the vendor's architecture directly, including whether infrastructure is dedicated and whether data is destroyed after each session.

Continuous Monitoring and Feedback Systems

Once AI systems are deployed, continuous monitoring is essential to maintain compliance and performance. Introduce drift detection systems to monitor whether AI output quality deteriorates as real-world data diverges from its training set. Define clear performance thresholds or data refresh intervals that automatically trigger model retraining 11. Ensure you have model versioning and rollback capabilities so you can revert to earlier versions if a new deployment introduces bias or performs poorly.
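
Drift detection often starts by comparing the distribution of live inputs with a baseline. One common measure is the Population Stability Index, PSI = Σ (a_i − e_i) · ln(a_i / e_i) over bins i, where e_i and a_i are the baseline and live proportions. The sketch assumes inputs have already been binned (for example by document type), and the 0.25 retraining threshold is a widely used rule of thumb, not a regulatory standard.

```python
import math
from typing import Sequence

RETRAIN_THRESHOLD = 0.25  # common rule of thumb; below 0.1 is usually read as stable


def psi(baseline: Sequence[float], live: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions of proportions."""
    if len(baseline) != len(live):
        raise ValueError("both distributions must use the same bins")
    total = 0.0
    for e, a in zip(baseline, live):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def needs_retraining(baseline: Sequence[float], live: Sequence[float]) -> bool:
    return psi(baseline, live) > RETRAIN_THRESHOLD


baseline = [0.25, 0.25, 0.25, 0.25]  # hypothetical share of inputs per document type
print(round(psi(baseline, [0.24, 0.26, 0.25, 0.25]), 4))  # small value: stable
print(needs_retraining(baseline, [0.05, 0.15, 0.30, 0.50]))  # True
```

Input drift is only a proxy: a high PSI says the data has changed, not that outputs have degraded, so it works best alongside spot checks of output quality.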

Schedule a quarterly review to reassess AI tools, explore new options, and update policies in response to regulatory change 15. For instance, Colorado's AI Act, originally scheduled to take effect in February 2026, requires firms to demonstrate ongoing governance and accountability for AI-driven decisions 15. Regular audits should also verify who has access to data used in AI systems, ensuring compliance with privacy laws and internal IT protocols 1. Engaging internal auditors for risk assessments throughout the AI lifecycle can further strengthen governance.

Maintain immutable audit ledgers that log all queries, user IDs, and source document IDs in an append-only format. This provides a clear record of how AI influenced investment decisions and supports regulatory examinations 13. Retain redacted inputs for 90 days and store audit records for 3–7 years to meet financial recordkeeping requirements 13. In addition, run quarterly red-teaming exercises to test whether adversarial inputs can defeat redaction, and perform hallucination checks using contradictory documents 13.
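
An append-only ledger of this kind is often built as a hash chain: each entry stores the hash of the one before it, so editing an earlier record breaks verification. The sketch logs the fields named in the text (query, user ID, source document IDs); the class and user IDs are hypothetical. Hash-chaining makes tampering detectable rather than impossible, and dropping the newest entries would go unnoticed unless the latest hash is anchored externally or the log sits on write-once storage, both outside this sketch.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field
from typing import Any, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict[str, Any]) -> str:
    # sort_keys gives a stable serialisation, so the same entry always hashes the same
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLedger:
    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, user_id: str, query: str, source_doc_ids: list[str],
               ts: Optional[float] = None) -> dict[str, Any]:
        body: dict[str, Any] = {
            "ts": time.time() if ts is None else ts,
            "user_id": user_id,
            "query": query,
            "source_doc_ids": list(source_doc_ids),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; an edit, or removal of a non-final entry, fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


ledger = AuditLedger()
ledger.append("analyst-17", "Summarise SPA warranty caps", ["SPA-v3"])
ledger.append("partner-02", "List change-of-control clauses", ["SPA-v3", "SHA-v1"])
print(ledger.verify())  # True
ledger.entries[0]["query"] = "edited after the fact"
print(ledger.verify())  # False
```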

To safeguard accuracy and accountability, require explicit partner sign-off for any material investment decision or contract action based on AI-generated analysis 13. This feedback loop ensures AI outputs are carefully reviewed before they influence portfolio decisions.

"AI is evolving from a research accelerator into a continuous intelligence layer embedded across the full institutional diligence workflow" 16.

Conclusion

Generative AI has the potential to streamline private market transactions, but it comes with significant risks. These include fabricated outputs, breaches of sensitive data that could violate NDAs, and regulatory non-compliance that might result in fines. Such issues can compromise decision-making, encourage opaque "black box" processes, and damage trust and reputation 117.

To mitigate these risks, human oversight remains essential. While AI can handle the heavy lifting by creating a draft that's approximately 70% complete, experts must step in to review and refine the output 17.

The solution lies in adopting tools specifically tailored to the domain. Specialised, enterprise-grade platforms provide a safer alternative to generic AI systems. These tools are designed with M&A-specific conventions in mind, ensure data isolation, and protect sensitive deal information. For example, Axion Lab offers AI-powered due diligence tools that meet strict security standards, including end-to-end encryption, GDPR compliance, zero data retention, and adherence to the EU AI Act. This allows firms to extract early insights without compromising confidentiality.

Industry professionals emphasise this balance between AI and human expertise:

"AI handles production tasks - drafting, summarising, analysing. The core of investment banking - relationships, judgement, negotiation, strategic advice - requires human expertise that AI can't replicate" 17.

The firms that will thrive are those that integrate AI into their processes strategically. By building proprietary knowledge bases from past deals and relying on human judgement for critical decisions, they can pair AI's speed with expert oversight.

Security and governance should not be seen as barriers but as the foundation for leveraging AI effectively. This approach ensures firms can embrace AI's benefits while safeguarding confidentiality, maintaining accuracy, and staying compliant.